In the current attention economy, digital content creators face an unprecedented challenge: visual saturation has led to a psychological phenomenon known as sensory browsing. Users often scroll through feeds with a level of detachment that renders standard marketing tactics ineffective, particularly when the accompanying audio feels disconnected or generic. This friction between high-quality visuals and mediocre, repetitive stock music creates a cognitive dissonance that encourages viewers to disengage or hit the mute button. To recapture these lost audience segments, professional creators are turning to a precision-tuned AI Music Generator to craft instant, original emotional hooks that align with their narrative intent. By moving beyond the limitations of static libraries, the AI Music Generator allows for the synthesis of audio that is mathematically designed to resonate with specific audience demographics in real time.

The Psychological Correlation Between Auditory Precision And User Engagement Metrics

The human brain processes auditory information significantly faster than visual data, meaning the music of a video often dictates the viewer’s emotional response before they have even fully processed the first frame. When a soundtrack is specifically engineered to match the rhythmic pacing and emotional curve of a scene, user retention metrics tend to show a marked improvement. In my observations, the ability to generate a track that hits specific emotional beats—such as a sudden shift from suspense to relief—is what separates a viral piece of content from a forgotten one.

Traditional music sourcing often forces a compromise where the creator must edit their visuals to fit a pre-existing song. The shift toward generative audio reverses this power dynamic, allowing the music to be the adaptive element in the creative equation. This level of auditory precision is no longer a luxury reserved for high-end cinematic productions; it has become a baseline requirement for anyone looking to maintain a competitive edge on platforms where the first three seconds determine a video’s success or failure.

Strategic Deployment Of Neural Models For Specific Sensory Objectives

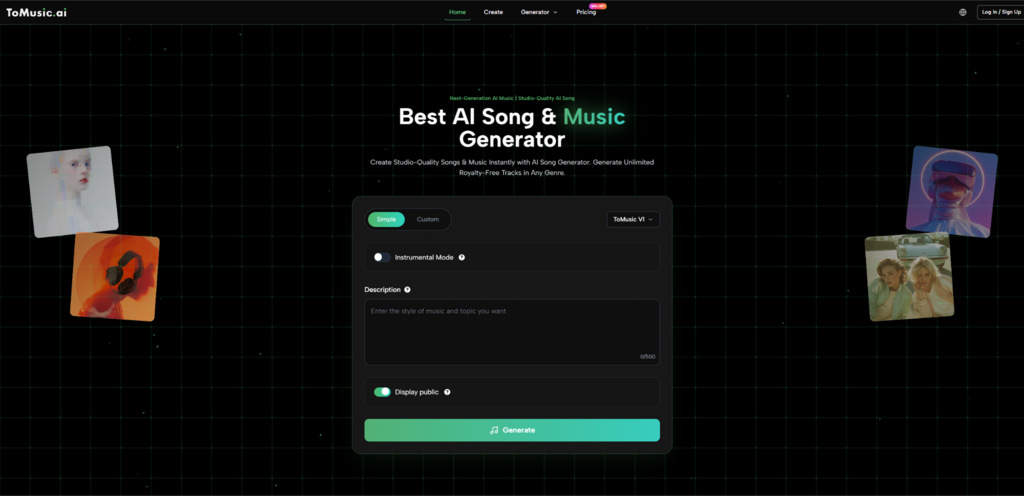

The architecture of modern generative platforms is increasingly modular, offering specialized engines that handle different facets of musicality. Understanding the specific strengths of these models is essential for creators who need to produce a high volume of content without sacrificing the unique “soul” of each individual piece. Rather than relying on a single algorithm, the system provides a suite of options that prioritize different technical outcomes.

Selecting The Optimal Processing Engine For Distinct Narrative Beats

Based on my testing, the selection of the underlying neural model serves as the foundation for the entire production process. For instance, the Studio Pro model appears to be the most robust choice for projects requiring a wide dynamic range and higher fidelity, making it ideal for content that will be consumed on high-quality audio systems. In contrast, the Express model seems to focus on the rapid blending of styles, which is perfect for creators who need to experiment with genre-fusing tracks that don’t fit into traditional categories. This specialized approach ensures that the generative process remains aligned with the intended delivery platform.

Calibrating Acoustic Intensity And Vocal Texture For Maximum Impact

Beyond the instrumental arrangement, the ability to fine-tune vocal characteristics is a significant development in neural audio. Whether the project requires a gritty, low-frequency narration style or a soaring, high-intensity pop vocal, the parameters can be adjusted to create a specific persona. The system’s ability to handle the nuances of human phrasing—such as the subtle pauses and emotional inflections—adds a layer of authenticity that was previously impossible to achieve with synthesized sound. This control over vocal texture allows brands to create a consistent “audio mascot” that becomes recognizable to their audience over time.

A Technical Comparison Of Generative Models For Diverse Content Requirements

To maximize the efficiency of the creative workflow, it is important to categorize the available models by their primary functional strengths. The following table provides a clear breakdown of how these different neural frameworks perform across key production metrics.

Iterative Workflow For Rapid Production Of Professional Grade Audio Tracks

The transition from a conceptual idea to a finished audio asset is managed through a streamlined three-step process designed to maximize creative output while minimizing technical friction. Following this official sequence is the most effective way to ensure consistent results.

- Constructing the Auditory Prompt: The process begins with the user defining the core characteristics of the track in the input field. This involves describing the genre, mood, and specific instruments. For projects that include vocals, the user can either input their own lyrics or use the built-in generator to create a lyrical structure that matches the chosen theme.

- Parameter Tuning and Model Selection: Once the creative intent is established, the user selects the most appropriate AI model and fine-tunes the atmospheric settings. This includes choosing from a library of 150+ styles and 30+ moods, as well as setting the tempo (slow, medium, or fast) to ensure the rhythm of the track complements the visual pacing of the project.

- Synthesis and Final Asset Management: After confirming the settings, the system generates the track, which can be previewed immediately. Users can assess the quality of the composition and the vocal clarity before saving the final file to their library. This allows for easy organization and the ability to revisit successful prompt structures for future iterations.
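For creators who plan tracks before opening the tool, the first two steps above can be captured as a small record. The following is a minimal sketch, not the platform's API: the `TrackRequest` class, its field names, and the `prompt_text` helper are all hypothetical, and only the model names and the three tempo values come from the article.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# The three tempo settings named in the workflow above.
TEMPOS = ("slow", "medium", "fast")


@dataclass
class TrackRequest:
    """Hypothetical planning record for steps 1 and 2 (not a real API object)."""
    genre: str
    mood: str
    instruments: List[str] = field(default_factory=list)
    lyrics: Optional[str] = None  # None means an instrumental track
    model: str = "Studio Pro"     # model name as mentioned in the article
    tempo: str = "medium"

    def __post_init__(self) -> None:
        # Reject tempo values the workflow does not offer.
        if self.tempo not in TEMPOS:
            raise ValueError(f"tempo must be one of {TEMPOS}, got {self.tempo!r}")

    def prompt_text(self) -> str:
        """Join mood, genre, instruments, and tempo into one description."""
        parts = [f"{self.mood} {self.genre}"]
        if self.instruments:
            parts.append("featuring " + ", ".join(self.instruments))
        parts.append(f"{self.tempo} tempo")
        return ", ".join(parts)


request = TrackRequest(
    genre="synthwave",
    mood="tense",
    instruments=["analog bass", "gated drums"],
    tempo="fast",
)
print(request.prompt_text())
# tense synthwave, featuring analog bass, gated drums, fast tempo
```

Writing the settings down in this form makes it easier to reuse a prompt structure later, since the text typed into the input field is derived from the same fields every time.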

Evaluating The Long Term Stability Of Generative Audio Assets

The long-term value of moving to a generative model lies in the creation of a proprietary audio library that is entirely free from third-party licensing risks. In an era where copyright algorithms are becoming increasingly aggressive, having total ownership over every note in a soundtrack provides a level of legal security that traditional libraries cannot match. This allows for the scaling of content across multiple platforms and international borders without the fear of sudden takedown notices or royalty disputes.

Navigating The Technical Constraints Of Neural Music Composition Systems

While the capabilities of the AI are expansive, it is important for creators to manage their expectations regarding the nature of generative output. In my experience, the system performs best when given specific, multi-layered prompts rather than vague instructions. There is a learning curve associated with understanding how certain keywords interact within the neural network. Sometimes, achieving a perfect result requires generating two or three variations of the same prompt to find the one that truly captures the intended atmospheric nuance. This iterative approach is a natural part of the collaboration between human creativity and algorithmic execution.
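One way to keep this iteration organized is to log each take and the score given after previewing it. The sketch below assumes a hypothetical `Attempt` record and a 1 to 5 rating assigned by the creator; nothing here is produced by the platform itself.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Attempt:
    """One generation of a prompt, scored by the creator after previewing it."""
    prompt: str
    take: int    # 1, 2, 3... for repeated generations of the same prompt
    rating: int  # the creator's own 1-5 score (an assumed scale)


def best_take(attempts: List[Attempt]) -> Attempt:
    """Return the highest-rated attempt; the earlier take wins a tie."""
    if not attempts:
        raise ValueError("no attempts recorded")
    return max(attempts, key=lambda a: (a.rating, -a.take))


prompt = "tense synthwave, featuring analog bass, fast tempo"
log = [
    Attempt(prompt, take=1, rating=3),
    Attempt(prompt, take=2, rating=5),
    Attempt(prompt, take=3, rating=5),
]
print(best_take(log).take)  # 2
```

Keeping the prompt text alongside each score also shows, over time, which keywords tend to produce usable results.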

Establishing A Standardized Library For Multi Platform Brand Consistency

For professional teams, the ability to store and organize generated assets within a centralized cloud library is a critical feature for maintaining brand consistency. By documenting the specific models, moods, and styles used for successful campaigns, a brand can build a “sonic style guide” that ensures all future audio content feels like part of the same ecosystem. This systematic approach to audio production reduces the time spent on decision-making and ensures that even high-volume content streams maintain a high standard of professional quality.
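A "sonic style guide" of this kind can be as simple as a JSON file that records which settings worked for which campaign. The following sketch assumes a hypothetical schema (`StyleGuideEntry` and its fields are illustrative, as are the campaign, style, and mood values) and uses only the Python standard library.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class StyleGuideEntry:
    """One documented setting combination from a campaign (hypothetical schema)."""
    campaign: str
    model: str
    mood: str
    style: str
    prompt: str


def dump_guide(entries: List[StyleGuideEntry]) -> str:
    """Serialize the guide to JSON so it can be stored and shared by a team."""
    return json.dumps([asdict(e) for e in entries], indent=2, sort_keys=True)


def load_guide(text: str) -> List[StyleGuideEntry]:
    """Rebuild entries from the JSON produced by dump_guide."""
    return [StyleGuideEntry(**item) for item in json.loads(text)]


def entries_for_mood(entries: List[StyleGuideEntry], mood: str) -> List[StyleGuideEntry]:
    """Look up past settings by mood, ignoring letter case."""
    return [e for e in entries if e.mood.lower() == mood.lower()]


guide = [
    StyleGuideEntry("spring-launch", "Studio Pro", "Uplifting", "indie pop",
                    "uplifting indie pop, featuring acoustic guitar, medium tempo"),
    StyleGuideEntry("teaser-reel", "Express", "Tense", "synthwave",
                    "tense synthwave, featuring analog bass, fast tempo"),
]
restored = load_guide(dump_guide(guide))
print([e.campaign for e in entries_for_mood(restored, "tense")])
# ['teaser-reel']
```

Because the entries round-trip through plain JSON, the guide can sit next to other brand assets and be reviewed like any other document.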

The future of digital media is increasingly defined by the ability to provide a hyper-personalized experience for the viewer. As the tools for visual and auditory generation continue to merge, the creators who can master the art of precision sound design will be the ones who define the next generation of digital storytelling. By utilizing a platform that offers both speed and sophisticated control, creators are no longer just producing content; they are architecting complete sensory environments that captivate and retain their audience’s attention in a crowded marketplace.
