The Variable Pipeline: Optimizing AI Video Iteration for Performance Ads

Performance marketing has long relied on the “volume-plus-variation” model. In the era of static images, this meant swapping a background color or a CTA button and running a split test. Video content, however, remained the bottleneck. The cost and time required to produce a dozen high-quality video variants traditionally made high-tempo iteration impossible for most lean teams. The introduction of the AI Video Generator has fundamentally shifted this math, moving video from a high-friction asset to a variable component of the agile creative stack.

However, simply having access to these tools does not equate to a successful ad campaign. The difference between a high-converting video and one that users scroll past lies in the refinement of the pipeline—specifically, how an operator manages prompts, source assets, and the iteration loop to ensure output quality meets brand standards.

The Shift from Descriptive to Technical Prompting

Early adopters of generative tools often approach them with a “slot machine” mentality: input a few descriptive words, pull the lever, and hope for a usable clip. In a commercial environment where ROAS (Return on Ad Spend) is the primary metric, this randomness is a liability.

To gain control over an AI Video Generator, the prompting strategy must evolve from creative prose to technical instruction. Instead of asking for “a woman drinking coffee in a sunlit kitchen,” an operator-led prompt specifies the cinematic parameters that shape how a viewer perceives the shot: lens focal length (e.g., “35mm wide shot”), lighting type (e.g., “high-key natural morning light”), and camera movement (e.g., “slow dolly-in on the subject’s face”).
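One way to make this discipline repeatable is to give each cinematic parameter its own slot, so a prompt is assembled rather than written freehand. The sketch below is a minimal illustration, assuming a hypothetical `ShotSpec` structure; the slot order is a team convention chosen for this example, not a requirement of any particular model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotSpec:
    """Hypothetical container for the cinematic parameters of a single shot."""

    subject: str
    lens: str         # e.g. "35mm wide shot"
    lighting: str     # e.g. "high-key natural morning light"
    camera_move: str  # e.g. "slow dolly-in on the subject's face"

    def to_prompt(self) -> str:
        # Fixed slot order: technical parameters first, subject last. The order is
        # a convention for this sketch, not something any known model requires.
        return f"{self.lens}, {self.lighting}, {self.camera_move}: {self.subject}"


spec = ShotSpec(
    subject="a woman drinking coffee in a sunlit kitchen",
    lens="35mm wide shot",
    lighting="high-key natural morning light",
    camera_move="slow dolly-in on the subject's face",
)
print(spec.to_prompt())
# 35mm wide shot, high-key natural morning light, slow dolly-in on the subject's face: a woman drinking coffee in a sunlit kitchen
```

Because each parameter is a separate field, swapping only the lens or only the lighting between generations becomes a one-field change.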

One persistent limitation in current models is the difficulty of translating complex abstract concepts into literal pixels. For example, asking for “reliability” or “fast-acting relief” often results in nonsensical visual metaphors. Performance marketers find more success by prompting for the visual indicators of those qualities—steadiness, sharp focus, and rapid, purposeful movement.
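A team can encode this translation step as a small lookup that replaces abstract qualities with concrete visual cues before a prompt is sent. The sketch below assumes a hypothetical `VISUAL_CUES` table built from the examples above (steadiness, sharp focus, rapid and purposeful movement); the exact phrases are illustrative, not a tested vocabulary for any model.

```python
# Illustrative mapping from abstract qualities to visual indicators, based on the
# examples in the text. The exact phrases are assumptions, not a tested vocabulary.
VISUAL_CUES: dict[str, list[str]] = {
    "reliability": ["steady, locked-off camera", "sharp focus on the product"],
    "fast-acting relief": ["rapid, purposeful movement"],
}


def concretize(prompt: str, qualities: list[str]) -> str:
    """Append the visual cues for each abstract quality to a base prompt.

    Qualities with no mapping are dropped rather than passed through, since the
    abstract word itself tends to produce nonsensical visual metaphors.
    """
    cues = [cue for quality in qualities for cue in VISUAL_CUES.get(quality, [])]
    return ", ".join([prompt, *cues])


print(concretize("close-up of a tablet dissolving in water", ["fast-acting relief", "trust"]))
# close-up of a tablet dissolving in water, rapid, purposeful movement
```

Dropping unmapped words is the key design choice here: the abstract term never reaches the model, only its visible stand-ins do.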

Source Assets: The Anchor for Temporal Consistency

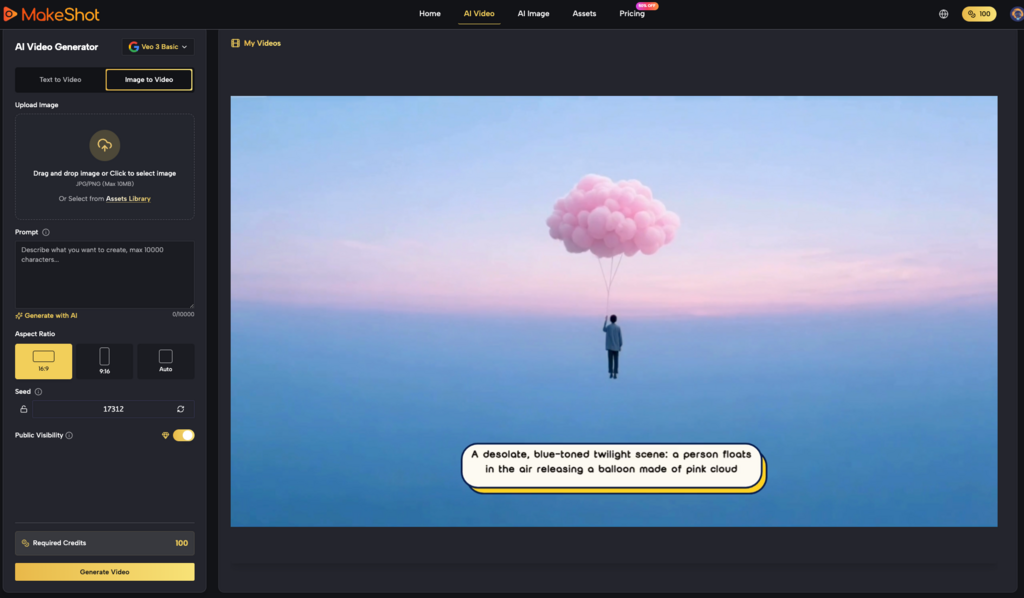

One of the most effective ways to stabilize the output of an AI Video Generator is to utilize a source image rather than relying solely on text-to-video. In an image-to-video workflow, the starting frame provides the model with a fixed reference for color palette, character likeness, and product geometry.

For ad creative, this is vital for brand safety. If a performance team is testing variations of a hero product, they cannot afford to have the product’s label or shape morph mid-clip. By using a high-fidelity rendering or a professional photograph as the seed asset, the AI’s primary task shifts from “imagining everything” to “animating the existing.”

However, it is important to reset expectations regarding physics. While modern models are excellent at simulating fluid motion and simple physics like wind in hair or steam rising from a cup, they frequently struggle with complex interactions, such as hands holding objects or the intricate mechanics of a folding device. When the source asset is too complex, the resulting video often suffers from “melting” artifacts, where the AI cannot reconcile the static image’s structure with the requested movement.

Structuring the Iteration Loop

In a systems-minded workflow, iteration is not about luck; it is about variable isolation. When a generated clip misses the mark, the operator must identify which of the three pillars failed: the prompt, the seed asset, or the model’s internal motion parameters.
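Variable isolation can be enforced mechanically: hold a baseline fixed and generate variants that change exactly one pillar. This is a minimal sketch, assuming a hypothetical `GenerationInputs` record in which `motion_strength` stands in for the model’s motion parameters; it only prepares inputs and does not call any real generation API.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class GenerationInputs:
    """The three pillars from the text; field names are hypothetical."""

    prompt: str
    seed_asset: str         # file path or ID of the source image
    motion_strength: float  # stand-in for the model's motion parameters


def isolate(baseline: GenerationInputs, pillar: str, candidates: list) -> list[GenerationInputs]:
    """Build variants that differ from the baseline in exactly one pillar."""
    if pillar not in {f.name for f in fields(baseline)}:
        raise ValueError(f"unknown pillar: {pillar}")
    return [replace(baseline, **{pillar: value}) for value in candidates]


baseline = GenerationInputs("slow dolly-in on a ceramic mug", "mug_hero.png", 0.4)
for variant in isolate(baseline, "motion_strength", [0.3, 0.5]):
    print(variant)
```

If a variant fixes the failure, the pillar that changed is the culprit; if none do, the next pillar gets the same treatment.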

- The Motion Strength Pivot

Many platforms allow users to adjust a motion slider. A common mistake is cranking this to the maximum to get “more action.” In practice, high motion often leads to a breakdown in temporal coherence—the video begins to flicker or the subject deforms. For professional-grade ads, a lower motion setting (30% to 50%) often yields a more stable, cinematic result that can be further enhanced in post-production with speed ramping.

- The Aspect Ratio Constraint

Performance ads are rarely one-size-fits-all. A 9:16 vertical video for TikTok requires a different compositional logic than a 1:1 Instagram square. Using an AI Video Generator that can output these ratios natively is crucial. Iterating across ratios often requires adjusting the prompt so the “action” stays in the center of the frame, where key visual hooks survive if the model crops the canvas.

- Negative Prompting as a Filter

Just as important as what you want is what you want to avoid. Performance marketers should build a library of “negative keywords”—terms like “blurry,” “distorted anatomy,” “low resolution,” and “cartoonish”—to narrow the model’s output range. This reduces the number of “wasted” generations and increases the throughput of usable assets.
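The three levers above can be captured together in a per-clip settings object. The sketch below is a minimal illustration under stated assumptions: `ClipSettings`, `center_safe_box`, and `NEGATIVE_LIBRARY` are hypothetical names, motion strength is assumed to be expressed on a 0 to 1 scale, and the hard error outside 0.3 to 0.5 is a deliberate guardrail rather than a platform rule. `center_safe_box` computes the centered region of a frame that survives a crop to another ratio, which is where the action needs to stay.

```python
from dataclasses import dataclass

# Negative keywords taken from the text; the library is meant to grow over time.
NEGATIVE_LIBRARY = ["blurry", "distorted anatomy", "low resolution", "cartoonish"]


@dataclass(frozen=True)
class ClipSettings:
    aspect_ratio: tuple[int, int]  # (width, height), e.g. (9, 16) or (1, 1)
    motion_strength: float         # assumed 0.0-1.0 scale behind the UI slider
    negative_prompt: str = ", ".join(NEGATIVE_LIBRARY)

    def __post_init__(self) -> None:
        # Guardrail: keep motion in the conservative 30-50% band recommended above.
        if not 0.3 <= self.motion_strength <= 0.5:
            raise ValueError("motion_strength outside the 0.3-0.5 stable band")


def center_safe_box(width: int, height: int, target: tuple[int, int]) -> tuple[int, int, int, int]:
    """Largest centered box with the target ratio inside a width x height frame.

    Returns (left, top, right, bottom) in pixels. Anything kept inside this box
    survives a center crop to the target ratio.
    """
    tw, th = target
    if width * th > height * tw:  # frame is wider than the target: trim the sides
        box_w, box_h = height * tw // th, height
    else:                         # frame is taller or equal: trim top and bottom
        box_w, box_h = width, width * th // tw
    left, top = (width - box_w) // 2, (height - box_h) // 2
    return left, top, left + box_w, top + box_h


vertical = ClipSettings(aspect_ratio=(9, 16), motion_strength=0.4)
print(vertical.negative_prompt)
print(center_safe_box(1080, 1920, (1, 1)))  # (0, 420, 1080, 1500)
```

For a 1080×1920 vertical master, keeping the subject between rows 420 and 1500 means a 1:1 cut for Instagram loses nothing essential.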

Practical Evaluation: Beyond the ‘Cool’ Factor

The excitement of seeing a static image come to life can often cloud a creator’s judgment. For an AI Video Generator output to be commercially viable, it must pass a “vibe check” that is grounded in E-E-A-T principles (Experience, Expertise, Authoritativeness, and Trustworthiness).

Does the motion look “uncanny”? Humans are exceptionally sensitive to unnatural biological movement. If a character’s eyes blink out of sync or their skin texture smooths over during a transition, the viewer’s trust is immediately eroded. In a performance ad, that loss of trust tends to show up as lower click-through rates.

Marketers must be willing to discard clips that look “cool” but feel “fake.” It is often better to use a shorter, two-second clip of perfect motion and loop it, rather than a six-second clip that degrades into artifacts by the fourth second. At this stage of the technology, there is a clear trade-off between clip duration and visual integrity.
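The duration trade-off reduces to simple arithmetic: find how many seconds stay clean, then loop that segment to the length the placement needs. This sketch assumes a hypothetical per-second artifact score (from a reviewer or an automated QC step) where higher means worse; the threshold value is a team choice, not a standard.

```python
import math


def clean_prefix_seconds(artifact_scores: list[float], threshold: float) -> int:
    """Count leading seconds whose artifact score stays below the threshold.

    artifact_scores holds one hypothetical score per second of footage; everything
    from the first degraded second onward is cut.
    """
    count = 0
    for score in artifact_scores:
        if score >= threshold:
            break
        count += 1
    return count


def loops_needed(clean_seconds: int, target_seconds: float) -> int:
    """How many times a clean segment must play to fill the target duration."""
    if clean_seconds <= 0:
        raise ValueError("no clean footage to loop; discard the clip")
    return math.ceil(target_seconds / clean_seconds)


# A six-second clip that degrades at the fourth second (illustrative scores).
scores = [0.05, 0.08, 0.10, 0.60, 0.80, 0.90]
print(clean_prefix_seconds(scores, threshold=0.3))  # 3
print(loops_needed(2, 6))                           # 3
```

A two-second clean segment played three times fills a six-second slot without ever showing the degraded footage.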

Managing the Production Pipeline at Scale

For a team producing hundreds of ads per month, the AI Video Generator becomes an engine that feeds the creative testing machine. The workflow usually follows a tiered structure:

- Tier 1: The Seed Phase. Create 5-10 high-quality static “concept” images using a tool like the MakeShot image generator.

- Tier 2: The Animation Phase. Run these images through the video generator using standardized motion prompts (e.g., “The Pan,” “The Zoom,” “The Subtle Pulse”).

- Tier 3: The Selection Phase. An editor reviews the batches and keeps the top 10% of clips, those showing the least “AI drift.”

- Tier 4: The Finishing Phase. These clips are brought into a traditional NLE (Non-Linear Editor) for color grading, text overlays, and audio syncing.
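The two middle tiers are the ones most easily scripted. The sketch below assumes hypothetical `Clip` records carrying a `drift_score` (lower is better) produced by whatever review process the team uses; Tier 1 and Tier 4 stay manual, and no real video-generation API is called.

```python
from dataclasses import dataclass

# Standardized motion presets named in the text; their prompt wording is left to the team.
MOTION_PRESETS = ["The Pan", "The Zoom", "The Subtle Pulse"]


@dataclass(frozen=True)
class Clip:
    seed_image: str
    motion_preset: str
    drift_score: float  # lower means less "AI drift"; how it is scored is assumed


def animation_jobs(seed_images: list[str]) -> list[tuple[str, str]]:
    """Tier 2: pair every Tier 1 seed image with every standardized motion preset."""
    return [(image, preset) for image in seed_images for preset in MOTION_PRESETS]


def select_top(clips: list[Clip], top_percent: int = 10) -> list[Clip]:
    """Tier 3: keep the top_percent of clips with the lowest drift (at least one).

    Integer arithmetic rounds the count up without floating-point surprises.
    """
    keep = max(1, (len(clips) * top_percent + 99) // 100)
    return sorted(clips, key=lambda clip: clip.drift_score)[:keep]


jobs = animation_jobs([f"concept_{i}.png" for i in range(10)])
print(len(jobs))  # 30
```

Ten concept images and three presets yield 30 clips; the 10% cut hands the three most stable ones to the editor for finishing.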

This hybrid approach acknowledges that while AI is excellent at generating raw visual data, it still lacks the intentionality required for a final, polished ad. The “expert” in the loop provides the judgment that the algorithm cannot.

The Commercial Reality of Generative Video

We are currently in a phase of rapid development where what was impossible six months ago is now a standard feature. However, the commercial application of an AI Video Generator is still hampered by certain realities. Copyright and trademark questions remain gray areas, and brand-specific consistency is still hard to achieve: you cannot reliably generate a clip of a specific trademarked sneaker or a licensed character without significant post-production retouching or specialized fine-tuning such as LoRAs (Low-Rank Adaptation).

Furthermore, the “cost per generation” must be weighed against the potential lift in ad performance. If a team spends 50 credits to get one usable three-second clip, that clip must drive enough incremental conversions to cover both the credits and the operator’s time.
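The break-even point can be made explicit. This is a back-of-the-envelope sketch; the credit price, operator hours, hourly rate, and margin per conversion below are hypothetical placeholders, to be replaced with a team’s own figures.

```python
def breakeven_conversions(
    credits_used: float,
    cost_per_credit: float,
    operator_hours: float,
    hourly_rate: float,
    profit_per_conversion: float,
) -> float:
    """Incremental conversions a clip must drive to pay back its production cost."""
    production_cost = credits_used * cost_per_credit + operator_hours * hourly_rate
    return production_cost / profit_per_conversion


# Hypothetical inputs: 50 credits at $0.50, 2 operator hours at $40/h, $21 margin per sale.
print(breakeven_conversions(50, 0.50, 2, 40, 21))  # 5.0
```

In this made-up case, the operator’s time ($80) dwarfs the credits ($25), which is why wasted generations cost more in hours than in credits.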

Building a Repeatable Asset Library

The ultimate goal for a performance marketer using an AI Video Generator is to build a library of “winning” motion patterns. Over time, you will notice that certain camera angles combined with specific subjects consistently yield high-retention clips.

By documenting these successes—treating prompts like code—teams can reduce the time-to-market for new campaigns. Instead of starting from scratch, they can deploy a “proven” motion prompt against a new set of product photos. This level of systematization is what separates the hobbyist from the performance professional.
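“Treating prompts like code” can be as literal as storing versioned templates alongside the results they produced. The sketch below is one possible shape, assuming a hypothetical `MotionPattern` record and using the standard library’s `string.Template` for placeholders; the retention figures are invented for illustration only.

```python
from dataclasses import dataclass, field
from string import Template


@dataclass
class MotionPattern:
    """A versioned, reusable motion prompt; the structure is a hypothetical sketch."""

    name: str
    version: int
    template: Template
    retention_rates: list[float] = field(default_factory=list)  # logged per deployment

    def render(self, **assets: str) -> str:
        # substitute() raises KeyError on a missing placeholder, so an incomplete
        # brief fails before any credits are spent.
        return self.template.substitute(**assets)

    def mean_retention(self) -> float:
        if not self.retention_rates:
            return 0.0
        return sum(self.retention_rates) / len(self.retention_rates)


def best_pattern(library: list[MotionPattern]) -> MotionPattern:
    """Pick the pattern with the highest average retention so far."""
    return max(library, key=lambda pattern: pattern.mean_retention())


orbit = MotionPattern("slow-orbit", 2, Template("slow orbit around $product on $surface, soft key light"))
push = MotionPattern("push-in", 1, Template("slow push-in toward $product, shallow depth of field"))
# Hypothetical retention figures for illustration only.
orbit.retention_rates += [0.41, 0.38]
push.retention_rates += [0.29]

winner = best_pattern([orbit, push])
print(winner.render(product="a matte black water bottle", surface="a concrete plinth"))
```

Deploying a proven pattern against a new product then means changing the `product` and `surface` arguments, not rewriting the prompt.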

Conclusion: The Future of the Agile Media Stack

The democratization of video production through generative tools is not about replacing film crews; it is about expanding the boundaries of what is testable. By utilizing an AI Video Generator as a variable-driven component of the marketing pipeline, brands can move at the speed of social trends and platform algorithms.

Success in this space requires a disciplined approach to inputs, a skeptical eye for quality, and a commitment to the iteration loop. As the models improve, the “uncanny valley” will narrow, but the need for human-led strategic direction will remain the most critical variable in the entire pipeline. The focus should always be on how the motion serves the message, rather than the novelty of the technology itself.